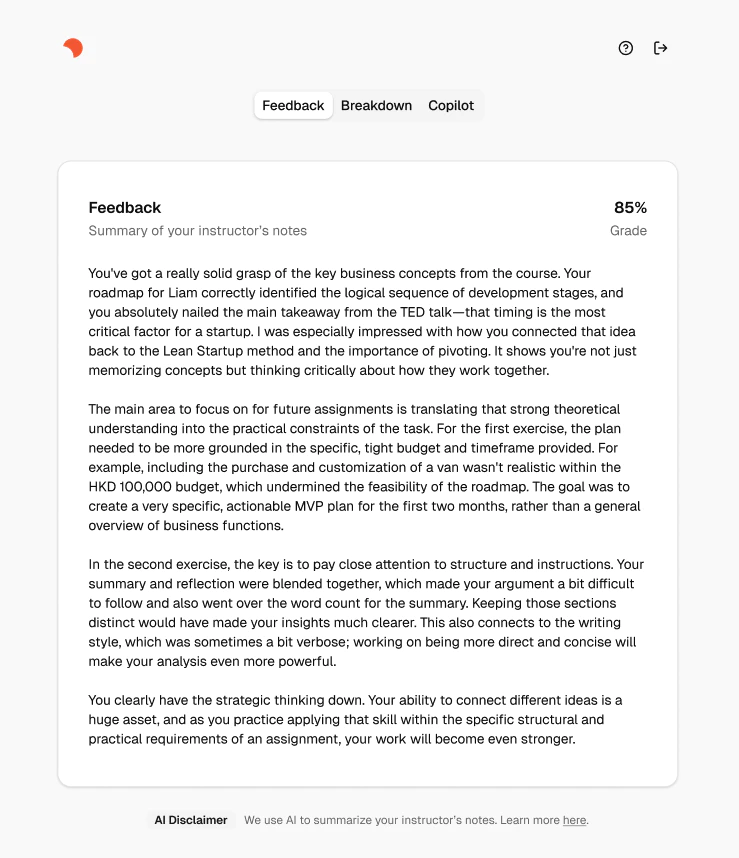

Li, Y., Shan, Z., Raković, M., et al. (2025). When AI explains in natural language: Unveiling the impact of generative AI explanations on educators’ grading and feedback practices. Educ Inf Technol.

Henderson, M., Bearman, M., Chung, J., Fawns, T., Buckingham Shum, S., Matthews, K. E., & de Mello Heredia, J. (2025). Comparing Generative AI and teacher feedback: student perceptions of usefulness and trustworthiness. Assessment & Evaluation in Higher Education, 1–16.